Enterprise Compliance for GitHub Actions Workflows


Enterprise compliance platforms are the path forward

April 13, 2026

In my previous consulting roles, I worked with enterprise organizations across financial services, healthcare, automotive, and the public sector. Almost all of them had a CI/CD compliance document. I would know - they hired me to write them!

These documents were incredibly detailed and covered important topics such as:

- defining the procedure to deploy production infrastructure

- required CVE scanning tools and approvals for app deployment

- business unit to cloud resource mappings

- HA and DR requirements

- rollback strategies

After building these documents, I would review them with my customer. They were generally very happy - the policies themselves addressed problems that had been plaguing them for far too long. However, this is where things often stopped. Having a policy is important, but how do you go from a corporate automation policy to implementing it across your entire organization?

What Compliance at Scale Actually Requires

Most conversations about CI/CD compliance focus on the specification itself. That's certainly important, but it's only one piece of a much more complex puzzle. To actually achieve compliance at scale, you generally need four things:

- A compliance specification. The document that defines your rules: pin actions to SHAs, use OIDC for cloud authentication, enforce least-privilege token permissions, don't hardcode secrets. Most enterprises have this in some form or another.

- Technical training. Security, DevOps, Platform, Cloud, and Software engineers need to understand how to reconcile their pipelines with the specification. For example, what does "minimize GitHub token permissions" actually look like in a GitHub Actions workflow?

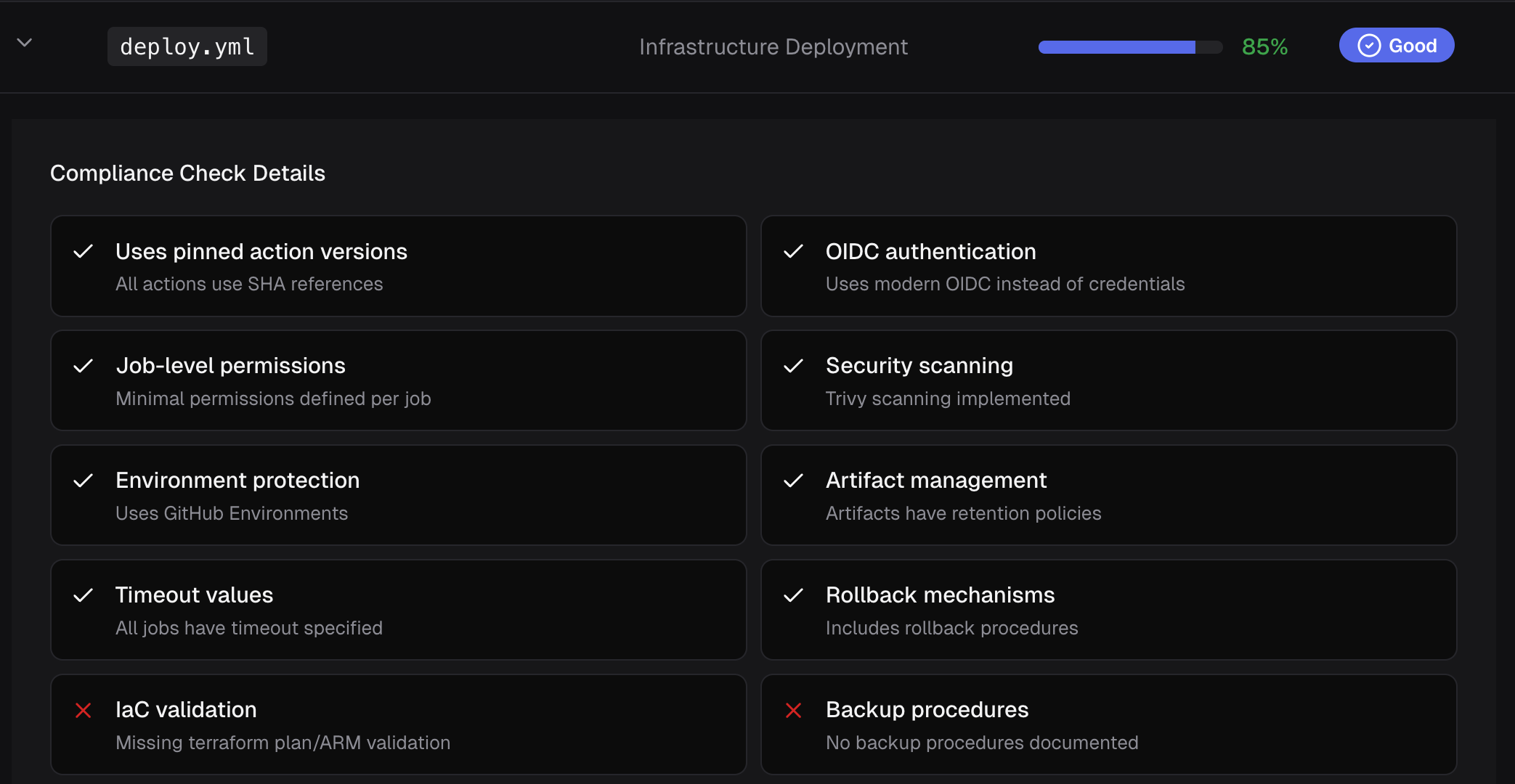

- Validation tooling. After an engineer modifies a pipeline to meet the specification, somebody or something needs to verify the changes are actually correct. This tooling needs to evaluate the pipelines with high accuracy ("right or wrong") and precision ("consistency").

- Reporting and visibility. Leadership, security teams, cloud centers of excellence, and auditors need to see the compliance posture of every pipeline in the organization with some expectation of accuracy. If a pipeline was compliant last week but fails checks this week, they need to know.

Most organizations have #1 (compliance specification). Some provide #2 (training), though rarely with enough depth or frequency to matter. And #3 (validation tooling) and #4 (reporting and visibility) are where things completely fall apart.
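To make the training question above concrete, here is a minimal sketch of what "minimize GitHub token permissions" can look like and how a check for it might be written. The two workflows are hypothetical and inlined as text. The function name and finding messages are assumptions made for illustration. A real tool would parse the YAML properly instead of using regular expressions.

```python
import re

# Hypothetical workflow that scopes the token to read-only repository contents.
LEAST_PRIVILEGE_WORKFLOW = """\
name: build
on: push
permissions:
  contents: read
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - run: make test
"""

# Hypothetical workflow that grants the token write access to every scope.
BROAD_WORKFLOW = """\
name: build
on: push
permissions: write-all
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - run: make test
"""


def token_permission_findings(workflow_text: str) -> list[str]:
    """Return findings about token permissions; an empty list means none were detected."""
    findings = []
    # A top-level `permissions:` key starts at column 0.
    if not re.search(r"^permissions:", workflow_text, flags=re.MULTILINE):
        findings.append("no top-level permissions block; the token uses the default permissions")
    # `write-all` can appear at workflow or job level, so leading indentation is allowed.
    if re.search(r"^\s*permissions:\s*write-all\s*$", workflow_text, flags=re.MULTILINE):
        findings.append("permissions: write-all grants every scope")
    return findings


if __name__ == "__main__":
    print(token_permission_findings(LEAST_PRIVILEGE_WORKFLOW))
    print(token_permission_findings(BROAD_WORKFLOW))
```

The first workflow is the shape most specifications are asking for: an explicit `permissions` block that lists only the scopes the job needs.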

The Training Gap

Technical training for your engineers is much more important than organizations think when it comes to pipeline compliance. I've seen organizations distribute a 40-page compliance document to their developers and expect them to just "figure it out." This doesn't work, no matter how talented the engineers are.

For example, which of the following is the correct way to submit a GET request to the official npm registry using GitHub Actions?

    # Using a GitHub Action
    - name: Check npm package
      uses: fjogeleit/http-request-action@v1
      with:
        url: https://registry.npmjs.org/<package-name>
        method: GET

    # Using curl
    - name: Check npm package
      run: curl -s https://registry.npmjs.org/<package-name>

I don't know, both could be legitimate. Each one does roughly the same thing. If corporate policy is "use the official npm registry to install Node packages," then both are acceptable. However, if corporate policy is "only use verified 3rd party GitHub Actions," then the first option might not be correct.

Why?

The author fjogeleit is not a verified publisher on GitHub. But maybe IT Security has vetted this Action and it's acceptable to use? As a Software Engineer who wants to get things right, if I wanted to verify this, I would...

- send a Slack message to a buddy on the DevOps team

- they would contact a friend on the Cloud Center of Excellence team

- they would contact someone from the IT Security team

- and that person would spend a week reading through corporate policies to figure out if this was legitimate

After waiting a week or two, I would have my answer for this specific situation. However, as a Software Engineer operating under strict corporate deadlines, I wouldn't do that at all - I would pick option #2 (run curl) and hope that is good enough.

The Validation Problem

Let's assume that every Software Engineer responsible for implementing pipelines has a strong understanding of what's correct/incorrect, AND they also know how to implement fixes. As an organization, if your goal is to have everyone "do things right," then you need a way to validate pipelines are correct.

You can naively do this with your favorite AI agent:

"hey AI buddy, evaluate deploy-k8s-dev.yaml against corporate compliance requirements"

This technique might yield accurate results. However, where are the "corporate compliance requirements"? How were they distributed? Is your copy current? If 10 people ask their favorite AI agent the same question, will the responses be consistent?

This is the core issue.

You can't achieve compliance at scale if every evaluation is a one-off conversation with a general-purpose AI that interprets your rules differently each time. Even if each Software Engineer uses the same model, agent, and prompt, you still need a centralized policy engine that distributes the actual rules just-in-time.
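As a rough sketch of the alternative, the rules can live in one versioned object that every evaluation reads, so the same workflow always produces the same findings. Everything named here (`RuleSet`, `evaluate`, the version string, the approved-owner list, and the placeholder SHA) is an assumption for illustration, not a description of any particular product. The regex-based extraction of `uses:` references is a simplification of real YAML parsing.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleSet:
    """Hypothetical centrally managed rules, identified by a version."""
    version: str
    approved_action_owners: frozenset[str]


USES_PATTERN = re.compile(r"^\s*-?\s*uses:\s*([^\s#]+)", re.MULTILINE)
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def evaluate(workflow_text: str, rules: RuleSet) -> list[str]:
    """Deterministically list violations of the given rule set."""
    violations = []
    for ref in USES_PATTERN.findall(workflow_text):
        if ref.startswith("./") or ref.startswith("docker://"):
            continue  # local actions and container images are out of scope in this sketch
        action, _, version = ref.partition("@")
        owner = action.split("/")[0]
        if owner not in rules.approved_action_owners:
            violations.append(f"{ref}: owner '{owner}' is not approved")
        if not FULL_SHA.match(version):
            violations.append(f"{ref}: not pinned to a full commit SHA")
    return sorted(violations)


# Hypothetical inputs: the step from the earlier example plus a common checkout step.
WORKFLOW = """\
steps:
  - name: Check npm package
    uses: fjogeleit/http-request-action@v1
  - uses: actions/checkout@v4
"""
RULES = RuleSet(version="example-1", approved_action_owners=frozenset({"actions"}))

if __name__ == "__main__":
    for violation in evaluate(WORKFLOW, RULES):
        print(f"[rules {RULES.version}] {violation}")
```

Because the rules are data rather than a prompt, ten engineers running this against the same file get the same answer, and updating the policy means publishing a new rule set version.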

The Reporting Problem

Even if you solve validation, you still need to generate reports. Your CISO might ask: "What percentage of our workflows are compliant?" Or your audit team needs evidence for SOC 2. Or your platform engineering lead wants to know which teams are falling behind.

Where does that data come from? If compliance checks are happening in individual AI agent conversations, there's no centralized record. Enterprises need a way to aggregate, analyze, and package the data. Without this, compliance scores are nice to have, but they don't provide any value.
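To illustrate what a centralized record makes possible, the sketch below rolls individual check results up into a per-team compliance percentage. The `CheckResult` shape, the team names, and the workflow file names other than `deploy-k8s-dev.yaml` are hypothetical. A real system would also store timestamps and rule versions so that week-over-week changes can be reported.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    """One stored evaluation of one workflow (hypothetical record shape)."""
    team: str
    workflow: str
    violations: int


def compliance_by_team(results: list[CheckResult]) -> dict[str, float]:
    """Percentage of each team's workflows that have zero violations."""
    totals: dict[str, int] = defaultdict(int)
    passing: dict[str, int] = defaultdict(int)
    for result in results:
        totals[result.team] += 1
        if result.violations == 0:
            passing[result.team] += 1
    return {team: round(100 * passing[team] / totals[team], 1) for team in sorted(totals)}


# Hypothetical stored results.
RESULTS = [
    CheckResult(team="payments", workflow="deploy-k8s-dev.yaml", violations=3),
    CheckResult(team="payments", workflow="build.yaml", violations=0),
    CheckResult(team="platform", workflow="release.yaml", violations=0),
]

if __name__ == "__main__":
    for team, percent in compliance_by_team(RESULTS).items():
        print(f"{team}: {percent}% compliant")
```

The aggregation itself is trivial. The hard part is the precondition: every evaluation has to be recorded in one place instead of disappearing into individual agent conversations.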

Why This Matters Now

The volume of GitHub Actions workflows in enterprise organizations is growing fast, and AI coding agents are accelerating it further. Every new workflow that enters your organization without proper compliance validation is a potential risk. Supply chain attacks targeting CI/CD pipelines are happening with increasing frequency: Trivy, LiteLLM, and axios npm are just a few examples.

CodeCargo is a GitHub Actions workflow compliance platform - we can convert your natural language documents into compliance rules, provide deterministic and AI-assisted evaluations, remediate violations, and provide the reporting your organization needs to be confident. Contact us to book a demo today.


CodeCargo Team

The CodeCargo team writes about GitHub workflow automation, developer productivity, and DevOps best practices.
